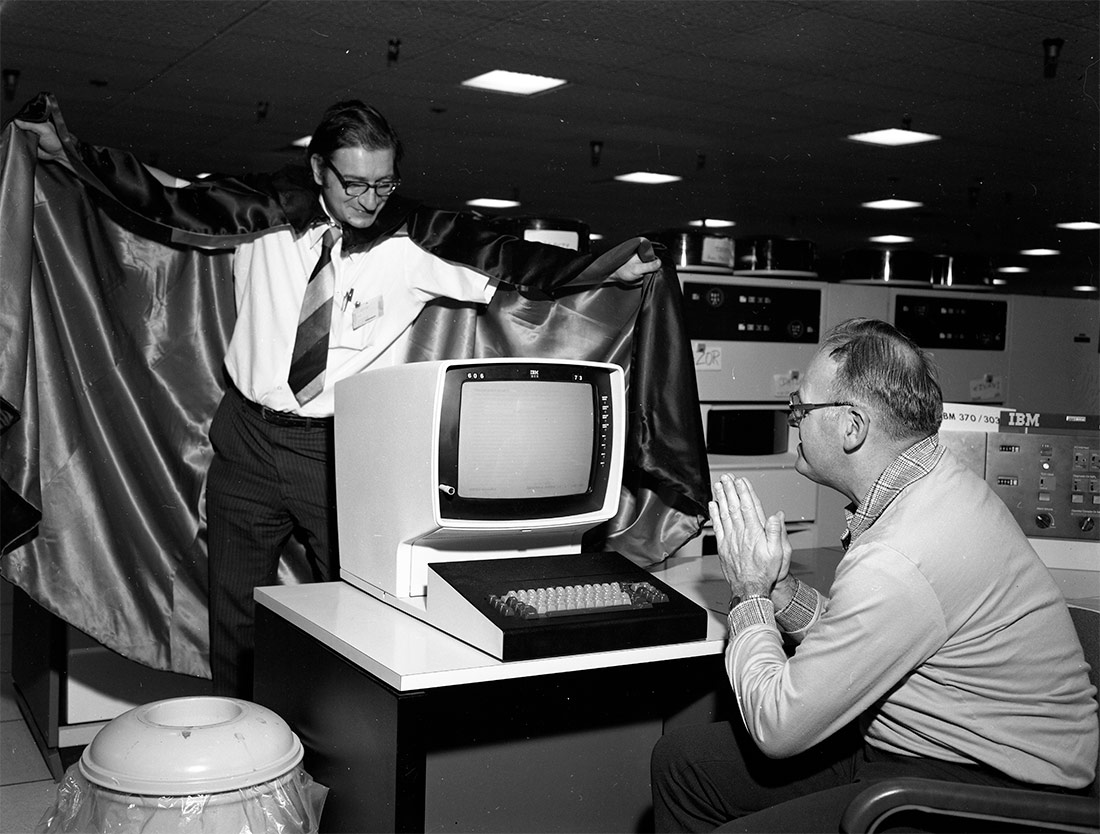

Computer with person dressed as Dracula. 1980 | John Marton, The U.S. National Archives | No known copyright restrictions

Jia Tolentino started surfing the Internet with Geocities, forums and GIFs. Years later, the battle between social media networks all vying for our constant attention has completely changed the scenario. In this advance excerpt from Falso espejo. Reflexiones sobre el autoengaño, courtesy of Temas de Hoy, Tolentino reviews this evolution to understand how the Internet ecosystem conditions our lives on and outside of the Internet.

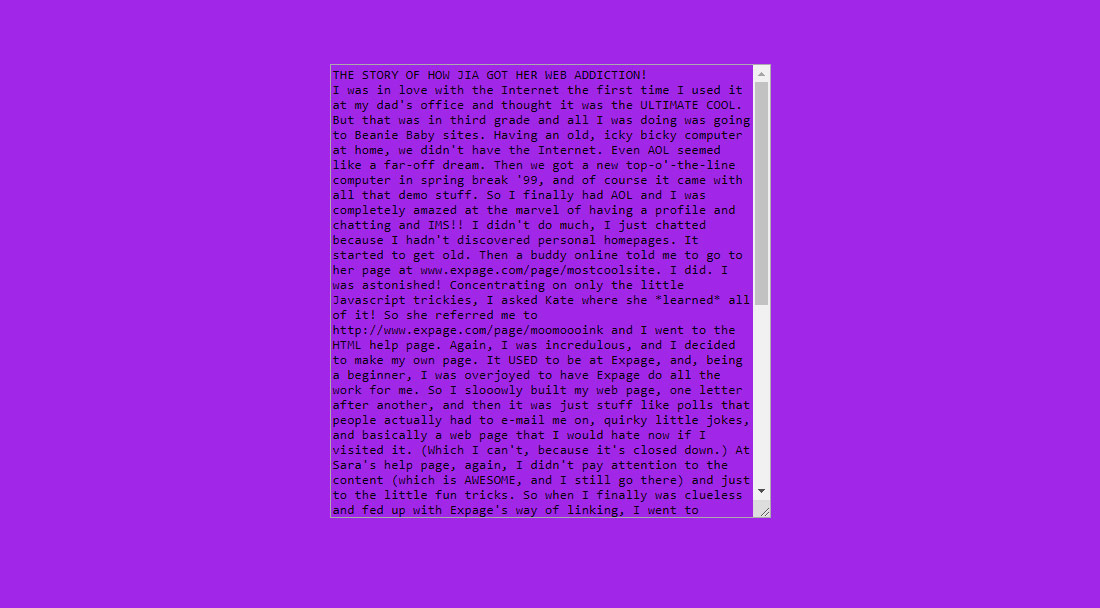

In the beginning the internet seemed good. “I was in love with the internet the first time I used it at my dad’s office and thought it was the ULTIMATE COOL,” I wrote, when I was ten, on an Angelfire subpage titled “The Story of How Jia Got Her Web Addiction.” In a text box superimposed on a hideous violet background, I continued:

But that was in third grade and all I was doing was going to Beanie Baby sites. Having an old, icky bicky computer at home, we didn’t have the Internet. Even AOL seemed like a far-off dream. Then we got a new top-o’-the-line computer in spring break ’99, and of course it came with all that demo stuff. So I finally had AOL and I was completely amazed at the marvel of having a profile and chatting and IMS!!

Then, I wrote, I discovered personal webpages. (“I was astonished!”) I learned HTML and “little Javascript trickies.” I built my own site on the beginner-hosting site Expage, choosing pastel colors and then switching to a “starry night theme.” Then I ran out of space, so I “decided to move to Angelfire. Wow.” I learned how to make my own graphics. “This was all in the course of four months,” I wrote, marveling at how quickly my ten-year-old internet citizenry was evolving. I had recently revisited the sites that had once inspired me, and realized “how much of an idiot I was to be wowed by that.”

I have no memory of inadvertently starting this essay two decades ago, or of making this Angelfire subpage, which I found while hunting for early traces of myself on the internet. It’s now eroded to its skeleton: its landing page, titled “THE VERY BEST,” features a sepia-toned photo of Andie from Dawson’s Creek and a dead link to a new site called “THE FROSTED FIELD,” which is “BETTER!” There’s a page dedicated to a blinking mouse GIF named Susie, and a “Cool Lyrics Page” with a scrolling banner and the lyrics to Smash Mouth’s “All Star,” Shania Twain’s “Man! I Feel Like a Woman!” and the TLC diss track “No Pigeons,” by Sporty Thievz. On an FAQ page – there was an FAQ page – I write that I had to close down my customizable cartoon-doll section, as “the response has been enormous.”

It appears that I built and used this Angelfire site over just a few months in 1999, immediately after my parents got a computer. My insane FAQ page specifies that the site was started in June, and a page titled “Journal” – which proclaims, “I am going to be completely honest about my life, although I won’t go too deeply into personal thoughts, though” – features entries only from October. One entry begins: “It’s so HOT outside and I can’t count the times acorns have fallen on my head, maybe from exhaustion.” Later on, I write, rather prophetically: “I’m going insane! I literally am addicted to the web!”

“The Story of How Jia Got Her Web Addiction” | Jia Tolentino

In 1999, it felt different to spend all day on the internet. This was true for everyone, not just for ten-year-olds: this was the You’ve Got Mail era, when it seemed that the very worst thing that could happen online was that you might fall in love with your business rival. Throughout the eighties and nineties, people had been gathering on the internet in open forums, drawn, like butterflies, to the puddles and blossoms of other people’s curiosity and expertise. Self-regulated newsgroups like Usenet cultivated lively and relatively civil discussion about space exploration, meteorology, recipes, rare albums. Users gave advice, answered questions, made friendships, and wondered what this new internet would become.

Because there were so few search engines and no centralized social platforms, discovery on the early internet took place mainly in private, and pleasure existed as its own solitary reward. A 1995 book called You Can Surf the Net! listed sites where you could read movie reviews or learn about martial arts. It urged readers to follow basic etiquette (don’t use all caps; don’t waste other people’s expensive bandwidth with overly long posts) and encouraged them to feel comfortable in this new world (“Don’t worry,” the author advised. “You have to really mess up to get flamed.”). Around this time, GeoCities began offering personal website hosting for dads who wanted to put up their own golfing sites or kids who built glittery, blinking shrines to Tolkien or Ricky Martin or unicorns, most capped off with a primitive guest book and a green-and-black visitor counter. GeoCities, like the internet itself, was clumsy, ugly, only half functional, and organized into neighborhoods: /area51/ was for sci-fi, /westhollywood/ for LGBTQ life, /enchantedforest/ for children, /petsburgh/ for pets. If you left GeoCities, you could walk around other streets in this ever-expanding village of curiosities. You could stroll through Expage or Angelfire, as I did, and pause on the thoroughfare where the tiny cartoon hamsters danced. There was an emergent aesthetic – blinking text, crude animation. If you found something you liked, if you wanted to spend more time in any of these neighborhoods, you could build your own house from HTML frames and start decorating.

This period of the internet has been labeled Web 1.0 – a name that works backward from the term Web 2.0, which was coined by the writer and user experience designer Darcy DiNucci in an article called “Fragmented Future,” published in 1999. “The Web we know now,” she wrote, “which loads into a browser window in essentially static screenfuls, is only an embryo of the Web to come. The first glimmerings of Web 2.0 are beginning to appear. . . . The Web will be understood not as screenfuls of texts and graphics but as a transport mechanism, the ether through which interactivity happens.” On Web 2.0, the structures would be dynamic, she predicted: instead of houses, websites would be portals, through which an ever-changing stream of activity – status updates, photos – could be displayed. What you did on the internet would become intertwined with what everyone else did, and the things other people liked would become the things that you would see. Web 2.0 platforms like Blogger and Myspace made it possible for people who had merely been taking in the sights to start generating their own personalized and constantly changing scenery. As more people began to register their existence digitally, a pastime turned into an imperative: you had to register yourself digitally to exist.

In a New Yorker piece from November 2000, Rebecca Mead profiled Meg Hourihan, an early blogger who went by Megnut. In just the prior eighteen months, Mead observed, the number of “weblogs” had gone from fifty to several thousand, and blogs like Megnut were drawing thousands of visitors per day. This new internet was social (“a blog consists primarily of links to other Web sites and commentary about those links”) in a way that centered on individual identity (Megnut’s readers knew that she wished there were better fish tacos in San Francisco, and that she was a feminist, and that she was close with her mom). The blogosphere was also full of mutual transactions, which tended to echo and escalate. The “main audience for blogs is other bloggers,” Mead wrote. Etiquette required that, “if someone blogs your blog, you blog his blog back.”

Through the emergence of blogging, personal lives were becoming public domain, and social incentives – to be liked, to be seen – were becoming economic ones. The mechanisms of internet exposure began to seem like a viable foundation for a career. Hourihan cofounded Blogger with Evan Williams, who later cofounded Twitter. JenniCam, founded in 1996 when the college student Jennifer Ringley started broadcasting webcam photos from her dorm room, attracted at one point up to four million daily visitors, some of whom paid a subscription fee for quicker loading images. The internet, in promising a potentially unlimited audience, began to seem like the natural home of self-expression. In one blog post, Megnut’s boyfriend, the blogger Jason Kottke, asked himself why he didn’t just write his thoughts down in private. “Somehow, that seems strange to me though,” he wrote. “The Web is the place for you to express your thoughts and feelings and such. To put those things elsewhere seems absurd.”

Every day, more people agreed with him. The call of self-expression turned the village of the internet into a city, which expanded at time-lapse speed, social connections bristling like neurons in every direction. At ten, I was clicking around a web ring to check out other Angelfire sites full of animal GIFs and Smash Mouth trivia. At twelve, I was writing five hundred words a day on a public LiveJournal. At fifteen, I was uploading photos of myself in a miniskirt on Myspace. By twenty-five, my job was to write things that would attract, ideally, a hundred thousand strangers per post. Now I’m thirty, and most of my life is inextricable from the internet, and its mazes of incessant forced connection – this feverish, electric, unlivable hell.

myspace.com, 2017 | Web Design Museum

As with the transition between Web 1.0 and Web 2.0, the curdling of the social internet happened slowly and then all at once. The tipping point, I’d guess, was around 2012. People were losing excitement about the internet, starting to articulate a set of new truisms. Facebook had become tedious, trivial, exhausting. Instagram seemed better, but would soon reveal its underlying function as a three-ring circus of happiness and popularity and success. Twitter, for all its discursive promise, was where everyone tweeted complaints at airlines and bitched about articles that had been commissioned to make people bitch. The dream of a better, truer self on the internet was slipping away. Where we had once been free to be ourselves online, we were now chained to ourselves online, and this made us self-conscious. Platforms that promised connection began inducing mass alienation. The freedom promised by the internet started to seem like something whose greatest potential lay in the realm of misuse.

Even as we became increasingly sad and ugly on the internet, the mirage of the better online self continued to glimmer. As a medium, the internet is defined by a built-in performance incentive. In real life, you can walk around living life and be visible to other people. But you can’t just walk around and be visible on the internet – for anyone to see you, you have to act. You have to communicate in order to maintain an internet presence. And, because the internet’s central platforms are built around personal profiles, it can seem – first at a mechanical level, and later on as an encoded instinct – like the main purpose of this communication is to make yourself look good. Online reward mechanisms beg to substitute for offline ones, and then overtake them. This is why everyone tries to look so hot and well-traveled on Instagram; this is why everyone seems so smug and triumphant on Facebook; this is why, on Twitter, making a righteous political statement has come to seem, for many people, like a political good in itself.

This practice is often called “virtue signaling,” a term most often used by conservatives criticizing the left. But virtue signaling is a bipartisan, even apolitical action. Twitter is overrun with dramatic pledges of allegiance to the Second Amendment that function as intra-right virtue signaling, and it can be something like virtue signaling when people post the suicide hotline after a celebrity death. Few of us are totally immune to the practice, as it intersects with real desire for political integrity. Posting photos from a protest against border family separation, as I did while writing this, is a microscopically meaningful action, an expression of genuine principle, and also, inescapably, some sort of attempt to signal that I am good.

Taken to its extreme, virtue signaling has driven people on the left to some truly unhinged behavior. A legendary case occurred in June 2016, after a two-year-old was killed at a Disney resort – dragged off by an alligator while playing in a no-swimming-allowed lagoon. A woman, who had accumulated ten thousand Twitter followers with her posts about social justice, saw an opportunity and tweeted, magnificently, “I’m so finished with white men’s entitlement lately that I’m really not sad about a 2yo being eaten by a gator because his daddy ignored signs.” (She was then pilloried by people who chose to demonstrate their own moral superiority through mockery – as I am doing here, too.) A similar tweet made the rounds in early 2018 after a sweet story went viral: a large white seabird named Nigel had died next to the concrete decoy bird to whom he had devoted himself for years. An outraged writer tweeted, “Even concrete birds do not owe you affection, Nigel,” and wrote a long Facebook post arguing that Nigel’s courtship of the fake bird exemplified… rape culture. “I’m available to write the feminist perspective on Nigel the gannet’s non-tragic death should anyone wish to pay me,” she added, underneath the original tweet, which received more than a thousand likes. These deranged takes, and their unnerving proximity to online monetization, are case studies in the way that our world – digitally mediated, utterly consumed by capitalism – makes communication about morality very easy but makes actual moral living very hard. You don’t end up using a news story about a dead toddler as a peg for white entitlement without a society in which the discourse of righteousness occupies far more public attention than the conditions that necessitate righteousness in the first place.

On the right, the online performance of political identity has been even wilder. In 2017, the social-media-savvy youth conservative group Turning Point USA staged a protest at Kent State University featuring a student who put on a diaper to demonstrate that “safe spaces were for babies.” (It went viral, as intended, but not in the way TPUSA wanted – the protest was uniformly roasted, with one Twitter user slapping the logo of the porn site Brazzers on a photo of the diaper boy, and the Kent State TPUSA campus coordinator resigned.) It has also been infinitely more consequential, beginning in 2014, with a campaign that became a template for right-wing internet-political action, when a large group of young misogynists came together in the event now known as Gamergate.

The issue at hand was, ostensibly, a female game designer perceived to be sleeping with a journalist for favorable coverage. She, along with a set of feminist game critics and writers, received an onslaught of rape threats, death threats, and other forms of harassment, all concealed under the banner of free speech and “ethics in games journalism.” The Gamergaters – estimated by Deadspin to number around ten thousand people – would mostly deny this harassment, either parroting in bad faith or fooling themselves into believing the argument that Gamergate was actually about noble ideals. Gawker Media, Deadspin’s parent company, itself became a target, in part because of its own aggressive disdain toward the Gamergaters: the company lost seven figures in revenue after its advertisers were brought into the maelstrom.

In 2016, a similar fiasco made national news in Pizzagate, after a few rabid internet denizens decided they’d found coded messages about child sex slavery in the advertising of a pizza shop associated with Hillary Clinton’s campaign. This theory was disseminated all over the far-right internet, leading to an extended attack on DC’s Comet Ping Pong pizzeria and everyone associated with the restaurant – all in the name of combating pedophilia – that culminated in a man walking into Comet Ping Pong and firing a gun. (Later on, the same faction would jump to the defense of Roy Moore, the Republican nominee for the Senate who was accused of sexually assaulting teenagers.) The over-woke left could only dream of this ability to weaponize a sense of righteousness. Even the militant antifascist movement, known as antifa, is routinely disowned by liberal centrists, despite the fact that the antifa movement is rooted in a long European tradition of Nazi resistance rather than a nascent constellation of radically paranoid message boards and YouTube channels. The worldview of the Gamergaters and Pizzagaters was actualized and to a large extent vindicated in the 2016 election – an event that strongly suggested that the worst things about the internet were now determining, rather than reflecting, the worst things about offline life.

Mass media always determines the shape of politics and culture. The Bush era is inextricable from the failures of cable news; the executive overreaches of the Obama years were obscured by the internet’s magnification of personality and performance; Trump’s rise to power is inseparable from the existence of social networks that must continually aggravate their users in order to continue making money. But lately I’ve been wondering how everything got so intimately terrible, and why, exactly, we keep playing along. How did a huge number of people begin spending the bulk of our disappearing free time in an openly torturous environment? How did the internet get so bad, so confining, so inescapably personal, so politically determinative – and why are all those questions asking the same thing?

I’ll admit that I’m not sure that this inquiry is even productive. The internet reminds us on a daily basis that it is not at all rewarding to become aware of problems that you have no reasonable hope of solving. And, more important, the internet already is what it is. It has already become the central organ of contemporary life. It has already rewired the brains of its users, returning us to a state of primitive hyperawareness and distraction while overloading us with much more sensory input than was ever possible in primitive times. It has already built an ecosystem that runs on exploiting attention and monetizing the self. Even if you avoid the internet completely – my partner does: he thought #tbt meant “truth be told” for ages – you still live in the world that this internet has created, a world in which selfhood has become capitalism’s last natural resource, a world whose terms are set by centralized platforms that have deliberately established themselves as near-impossible to regulate or control.

The internet is also in large part inextricable from life’s pleasures: our friends, our families, our communities, our pursuits of happiness, and – sometimes, if we’re lucky – our work. In part out of a desire to preserve what’s worthwhile from the decay that surrounds it, I’ve been thinking about five intersecting problems: first, how the internet is built to distend our sense of identity; second, how it encourages us to overvalue our opinions; third, how it maximizes our sense of opposition; fourth, how it cheapens our understanding of solidarity; and, finally, how it destroys our sense of scale.

In 1959, the sociologist Erving Goffman laid out a theory of identity that revolved around playacting. In every human interaction, he wrote in The Presentation of Self in Everyday Life, a person must put on a sort of performance, create an impression for an audience. The performance might be calculated, as with the man at a job interview who’s practiced every answer; it might be unconscious, as with the man who’s gone on so many interviews that he naturally performs as expected; it might be automatic, as with the man who creates the correct impression primarily because he is an upper-middle-class white man with an MBA. A performer might be fully taken in by his own performance – he might actually believe that his biggest flaw is “perfectionism” – or he might know that his act is a sham. But no matter what, he’s performing. Even if he stops trying to perform, he still has an audience, his actions still create an effect. “All the world is not, of course, a stage, but the crucial ways in which it isn’t are not easy to specify,” Goffman wrote.

To communicate an identity requires some degree of self-delusion. A performer, in order to be convincing, must conceal “the discreditable facts that he has had to learn about the performance; in everyday terms, there will be things he knows, or has known, that he will not be able to tell himself.” The interviewee, for example, avoids thinking about the fact that his biggest flaw actually involves drinking at the office. A friend sitting across from you at dinner, called to play therapist for your trivial romantic hang-ups, has to pretend to herself that she wouldn’t rather just go home and get in bed to read Barbara Pym. No audience has to be physically present for a performer to engage in this sort of selective concealment: a woman, home alone for the weekend, might scrub the baseboards and watch nature documentaries even though she’d rather trash the place, buy an eight ball, and have a Craigslist orgy. People often make faces, in private, in front of bathroom mirrors, to convince themselves of their own attractiveness. The “lively belief that an unseen audience is present,” Goffman writes, can have a significant effect.

Offline, there are forms of relief built into this process. Audiences change over – the performance you stage at a job interview is different from the one you stage at a restaurant later for a friend’s birthday, which is different from the one you stage for a partner at home. At home, you might feel as if you could stop performing altogether; within Goffman’s dramaturgical framework, you might feel as if you had made it backstage. Goffman observed that we need both an audience to witness our performances as well as a backstage area where we can relax, often in the company of “teammates” who had been performing alongside us. Think of coworkers at the bar after they’ve delivered a big sales pitch, or a bride and groom in their hotel room after the wedding reception: everyone may still be performing, but they feel at ease, unguarded, alone. Ideally, the outside audience has believed the prior performance. The wedding guests think they’ve actually just seen a pair of flawless, blissful newlyweds, and the potential backers think they’ve met a group of geniuses who are going to make everyone very rich. “But this imputation – this self – is a product of a scene that comes off, and is not a cause of it,” Goffman writes. The self is not a fixed, organic thing, but a dramatic effect that emerges from a performance. This effect can be believed or disbelieved at will.

Online – assuming you buy this framework – the system metastasizes into a wreck. The presentation of self in everyday internet still corresponds to Goffman’s playacting metaphor: there are stages, there is an audience. But the internet adds a host of other, nightmarish metaphorical structures: the mirror, the echo, the panopticon. As we move about the internet, our personal data is tracked, recorded, and resold by a series of corporations – a regime of involuntary technological surveillance, which subconsciously decreases our resistance to the practice of voluntary self-surveillance on social media. If we think about buying something, it follows us around everywhere. We can, and probably do, limit our online activity to websites that further reinforce our own sense of identity, each of us reading things written for people just like us. On social media platforms, everything we see corresponds to our conscious choices and algorithmically guided preferences, and all news and culture and interpersonal interaction are filtered through the home base of the profile. The everyday madness perpetuated by the internet is the madness of this architecture, which positions personal identity as the center of the universe. It’s as if we’ve been placed on a lookout that oversees the entire world and given a pair of binoculars that makes everything look like our own reflection. Through social media, many people have quickly come to view all new information as a sort of direct commentary on who they are.

This system persists because it is profitable. As Tim Wu writes in The Attention Merchants, commerce has been slowly permeating human existence – entering our city streets in the nineteenth century through billboards and posters, then our homes in the twentieth century through radio and TV. Now, in the twenty-first century, in what appears to be something of a final stage, commerce has filtered into our identities and relationships. We have generated billions of dollars for social media platforms through our desire – and then through a subsequent, escalating economic and cultural requirement – to replicate for the internet who we know, who we think we are, who we want to be.

Selfhood buckles under the weight of this commercial importance. In physical spaces, there’s a limited audience and time span for every performance. Online, your audience can hypothetically keep expanding forever, and the performance never has to end. (You can essentially be on a job interview in perpetuity.) In real life, the success or failure of each individual performance often plays out in the form of concrete, physical action – you get invited over for dinner, or you lose the friendship, or you get the job. Online, performance is mostly arrested in the nebulous realm of sentiment, through an unbroken stream of hearts and likes and eyeballs, aggregated in numbers attached to your name. Worst of all, there’s essentially no backstage on the internet; where the offline audience necessarily empties out and changes over, the online audience never has to leave. The version of you that posts memes and selfies for your precal classmates might end up sparring with the Trump administration after a school shooting, as happened to the Parkland kids – some of whom became so famous that they will never be allowed to drop the veneer of performance again. The self that traded jokes with white supremacists on Twitter is the self that might get hired, and then fired, by The New York Times, as happened to Quinn Norton in 2018. (Or, in the case of Sarah Jeong, the self that made jokes about white people might get Gamergated after being hired at the Times a few months thereafter.) People who maintain a public internet profile are building a self that can be viewed simultaneously by their mom, their boss, their potential future bosses, their eleven-year-old nephew, their past and future sex partners, their relatives who loathe their politics, as well as anyone who cares to look for any possible reason. Identity, according to Goffman, is a series of claims and promises. On the internet, a highly functional person is one who can promise everything to an indefinitely increasing audience at all times.

Incidents like Gamergate are partly a response to these conditions of hyper-visibility. The rise of trolling, and its ethos of disrespect and anonymity, has been so forceful in part because the internet’s insistence on consistent, approval-worthy identity is so strong. In particular, the misogyny embedded in trolling reflects the way women – who, as John Berger wrote, have always been required to maintain an external awareness of their own identity – often navigate these online conditions so profitably. It’s the self-calibration that I learned as a girl, as a woman, that has helped me capitalize on “having” to be online. My only experience of the world has been one in which personal appeal is paramount and self-exposure is encouraged; this legitimately unfortunate paradigm, inhabited first by women and now generalized to the entire internet, is what trolls loathe and actively repudiate. They destabilize an internet built on transparency and likability. They pull us back toward the chaotic and the unknown.

Of course, there are many better ways of making the argument against hyper-visibility than trolling. As Werner Herzog told GQ, in 2011, speaking about psychoanalysis: “We have to have our dark corners and the unexplained. We will become uninhabitable in a way an apartment will become uninhabitable if you illuminate every single dark corner and under the table and wherever – you cannot live in a house like this anymore.”
